The future of the interaction between man and machine is probably a brain-computer interface (BCI), at least according to a study detailing a wireless, portable device that can control a wheelchair or a small robot, published by researchers at Georgia Institute of Technology, the University of Kent and Wichita State University. This kind of scenario is even more futuristic than that of Minority Report, the movie that showed floating holographic screens controlled by gestures.

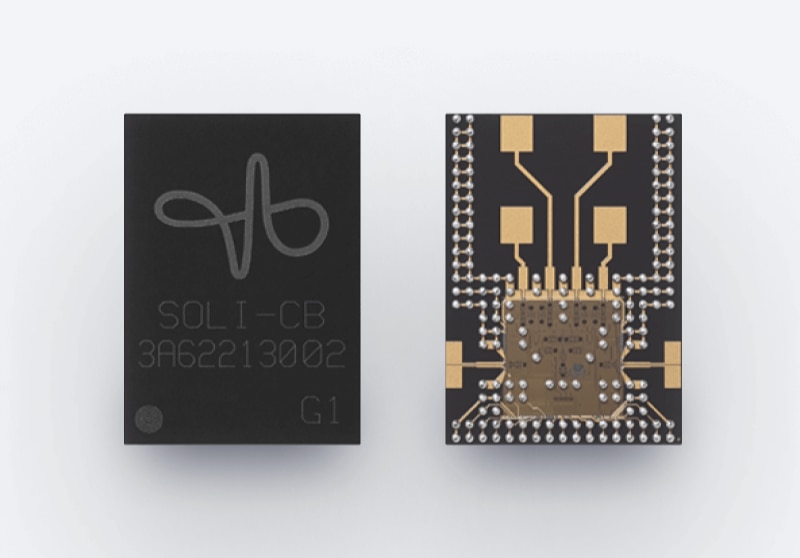

What's real is that Google has just launched a smartphone with a radar that allows gesture control: the Pixel 4. The feature is called Motion Sense, and it doesn't come out of the blue. Among Google's advanced technology projects, one of the most discussed in recent years has been Soli, a radar-based gesture recognition technology that uses high-speed sensors and data-analysis techniques based, for example, on the Doppler effect. The Pixel 4 is the first commercial device to feature a Soli sensor, which, as Google showed during the smartphone launch, has been incredibly miniaturized since the first tryouts; an alpha Project Soli development kit was announced back in 2015.

Today you can do basic actions with gesture-to-Pixel interaction: skip a song, silence a call, and even say hello to Pikachu (this was one of the most hilarious moments of the Pixel launch, actually). The radar is supposed to be smart enough to recognize unintentional gestures, and it also helps out in the face unlock process, which Google says is the fastest available today.

Obviously, this is only a start. But combined with the voice control provided via Google Assistant, which keeps getting better with the new features announced today, it's possible to imagine an interaction that doesn't need any touch, only remote control. Of course, nobody says this would be a better way for man and machine to interact. But Soli is certainly a new one. Only time will tell whether we'll take advantage of this technology or jump to something else, perhaps even directly to a brain-computer interface.